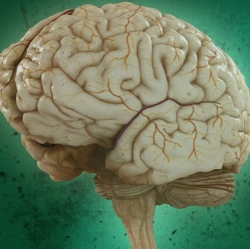

The researchers used functional magnetic resonance imaging (fMRI), which tracks blood-flow patterns in the brain, to observe how the brain encodes various thoughts. They found that the mind’s building blocks for constructing complex thoughts are formed not by words but by specific combinations of the brain’s various subsystems.

The study, published in Human Brain Mapping (an open-access preprint is also available) and funded by the U.S. Intelligence Advanced Research Projects Activity (IARPA), follows up on previous research and provides new evidence that the neural dimensions of concept representation are universal across people and languages.

“One of the big advances of the human brain was the ability to combine individual concepts into complex thoughts, to think not just of ‘bananas,’ but ‘I like to eat bananas in the evening with my friends,’” said CMU’s Marcel Just, the D.O. Hebb University Professor of Psychology in the Dietrich College of Humanities and Social Sciences. “We have finally developed a way to see thoughts of that complexity in the fMRI signal. The discovery of this correspondence between thoughts and brain activation patterns tells us what the thoughts are built of.”

The study used 240 specific events (each described by a sentence such as “The storm destroyed the theater”) and seven adult participants. The researchers measured the brain’s coding of these events using 42 “neurally plausible semantic features,” such as person, setting, size, social interaction, and physical action (as shown in the word clouds in the illustration above). By measuring the activation associated with each of these 42 features in a person’s brain, their computational model could tell what types of thoughts that person was focused on.

The researchers used a computational model to assess how the detected brain activation patterns (shown in the top illustration, for example) for 239 of the event sentences corresponded to the neurally plausible semantic features that characterized each sentence. The program was then able to decode the features of the 240th, left-out sentence. (For “cross-validation,” they repeated the procedure 240 times, leaving out a different sentence each time.)
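The leave-one-out procedure described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' actual model: the article does not say what kind of model was used, so a plain ridge-style linear regression stands in for it, and the data here are small synthetic numbers (12 "sentences," 4 "voxels," 3 "features") rather than real fMRI measurements. All function and variable names are hypothetical.

```python
import random

def solve(A, B):
    """Solve A X = B by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [row_a[:] + row_b[:] for row_a, row_b in zip(A, B)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        p = M[col][col]
        M[col] = [v / p for v in M[col]]
        for r in range(n):
            if r != col:
                f = M[r][col]
                M[r] = [a - f * b for a, b in zip(M[r], M[col])]
    return [row[n:] for row in M]

def fit_ridge(X, Y, lam=1e-6):
    """Fit weights W (inputs x outputs) minimizing ||XW - Y||^2 + lam * ||W||^2."""
    d, k = len(X[0]), len(Y[0])
    XtX = [[sum(x[i] * x[j] for x in X) + (lam if i == j else 0.0)
            for j in range(d)] for i in range(d)]
    XtY = [[sum(x[i] * y[j] for x, y in zip(X, Y))
            for j in range(k)] for i in range(d)]
    return solve(XtX, XtY)

def predict(W, x):
    """Apply the learned linear map W to one input vector x."""
    return [sum(x[i] * W[i][j] for i in range(len(x)))
            for j in range(len(W[0]))]

def leave_one_out(X, Y, lam=1e-6):
    """For each sentence, train on all the others and predict the held-out one."""
    preds = []
    for held in range(len(X)):
        X_train = X[:held] + X[held + 1:]
        Y_train = Y[:held] + Y[held + 1:]
        W = fit_ridge(X_train, Y_train, lam)  # never sees sentence `held`
        preds.append(predict(W, X[held]))
    return preds

# Synthetic stand-in data (assumption): features are an exact linear
# function of activation, so a linear decoder can recover them.
rng = random.Random(0)
n_sentences, n_voxels, n_features = 12, 4, 3
W_true = [[rng.uniform(-1, 1) for _ in range(n_features)]
          for _ in range(n_voxels)]
X = [[rng.uniform(-1, 1) for _ in range(n_voxels)]
     for _ in range(n_sentences)]          # activation patterns
Y = [predict(W_true, x) for x in X]        # semantic feature vectors

preds = leave_one_out(X, Y)
max_err = max(abs(p - t) for pr, tr in zip(preds, Y) for p, t in zip(pr, tr))
print(f"largest held-out prediction error: {max_err:.2e}")
```

Swapping the roles of `X` and `Y` in `leave_one_out` gives the reverse mapping, from semantic features to a predicted activation pattern.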

The model was able to predict the features of the left-out sentence with 87 percent accuracy, despite never being exposed to its activation before. It was also able to work in the other direction: to predict the activation pattern of a previously unseen sentence, knowing only its semantic features.
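The article does not say how the 87 percent figure was computed. One common way to score this kind of prediction is rank accuracy: compare the predicted vector with every candidate and record where the true one lands in the similarity ranking. The sketch below assumes that metric and uses cosine similarity; the function names and toy vectors are hypothetical. The same scoring works in either direction, whether the vectors are semantic features or activation patterns.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def rank_accuracy(predicted, candidates, true_index):
    """Return 1.0 if the true candidate is the most similar to the
    prediction, 0.0 if it is the least similar, and a proportional
    value in between."""
    sims = [cosine(predicted, c) for c in candidates]
    # Rank 1 means no other candidate is more similar than the true one.
    rank = 1 + sum(1 for s in sims if s > sims[true_index])
    return 1.0 - (rank - 1) / (len(candidates) - 1)

# Toy example: four candidate vectors and one prediction.
candidates = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 0]]
predicted = [0.9, 0.2, 0.0]

best = rank_accuracy(predicted, candidates, true_index=0)
second = rank_accuracy(predicted, candidates, true_index=3)
worst = rank_accuracy(predicted, candidates, true_index=2)
print(best, second, worst)
```

Averaging this score over all held-out sentences gives a single accuracy figure, where 0.5 corresponds to chance-level ranking.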

“Our method overcomes the unfortunate property of fMRI to smear together the signals emanating from brain events that occur close together in time, like the reading of two successive words in a sentence,” Just explained. “This advance makes it possible for the first time to decode thoughts containing several concepts. That’s what most human thoughts are composed of.”

“A next step might be to decode the general type of topic a person is thinking about, such as geology or skateboarding,” he added. “We are on the way to making a map of all the types of knowledge in the brain.”